Sensor fusion for positioning solutions

Enabling autonomy through sensor fusion.

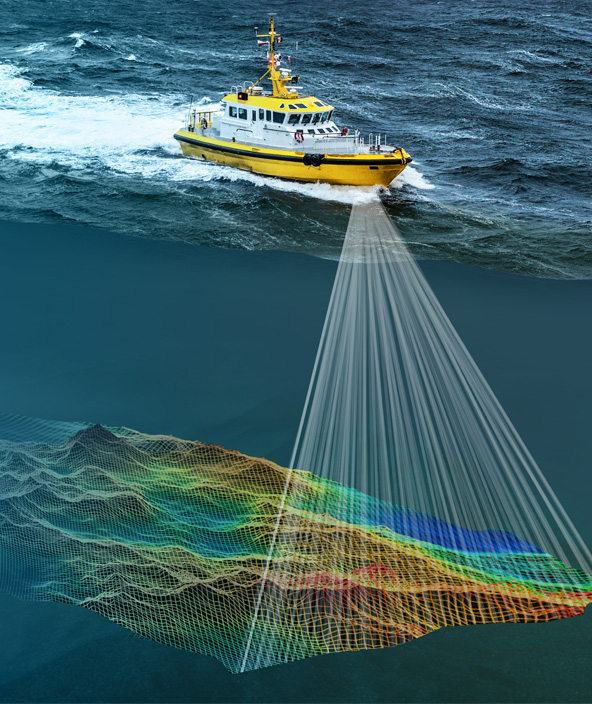

At its most basic level, sensor fusion is about combining measurements from different sensors, such as GNSS, inertial, photogrammetry, LiDAR and RADAR.

We'll explain how this is achieved and the ways it enables autonomous applications.

Overview

Why sensor fusion?

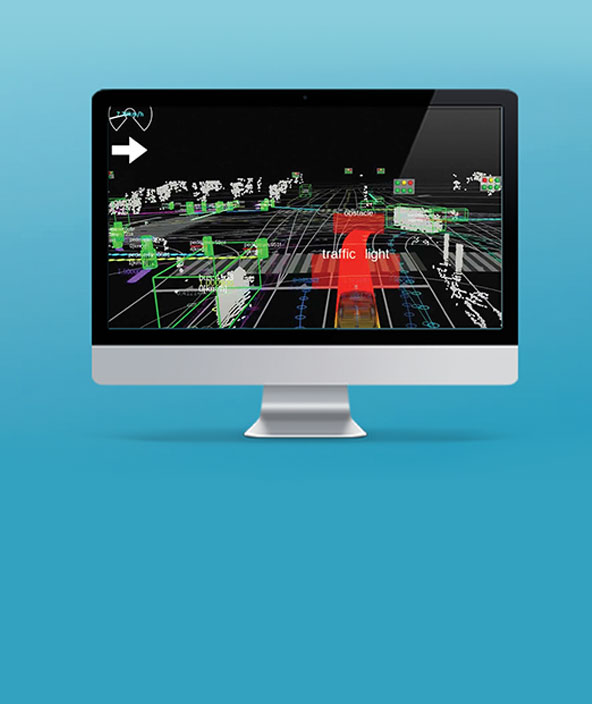

Sensor fusion is the complicated process of using algorithms to combine measurements from many different sensors. The result is an output position that is more accurate, more reliable and safer to use in autonomous applications.
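To make the idea concrete, here is a minimal sketch of one of the simplest fusion techniques, inverse-variance weighting, applied to two independent estimates of the same one-dimensional position. It is an illustration only, not the algorithm used in any particular product: the `fuse` function, the sensor readings and their variances are all hypothetical, and real systems typically use more elaborate estimators such as Kalman filters.

```python
def fuse(measurements):
    """Fuse independent 1-D estimates given as (value, variance) pairs.

    Each estimate is weighted by the inverse of its variance, so the
    more certain sensor has more influence on the result.
    """
    weights = [1.0 / variance for _, variance in measurements]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, measurements)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical readings of the same position along one axis, in metres.
gnss = (10.4, 4.0)   # noisier estimate: variance 4.0 m^2 (assumed)
lidar = (10.0, 1.0)  # tighter estimate: variance 1.0 m^2 (assumed)

position, variance = fuse([gnss, lidar])
print(f"fused position: {position:.2f} m, variance: {variance:.2f} m^2")
```

With these assumed inputs the fused position sits closer to the tighter estimate, and the fused variance (0.8 m²) is lower than that of either sensor alone, which is the sense in which fusion can give a more accurate output.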

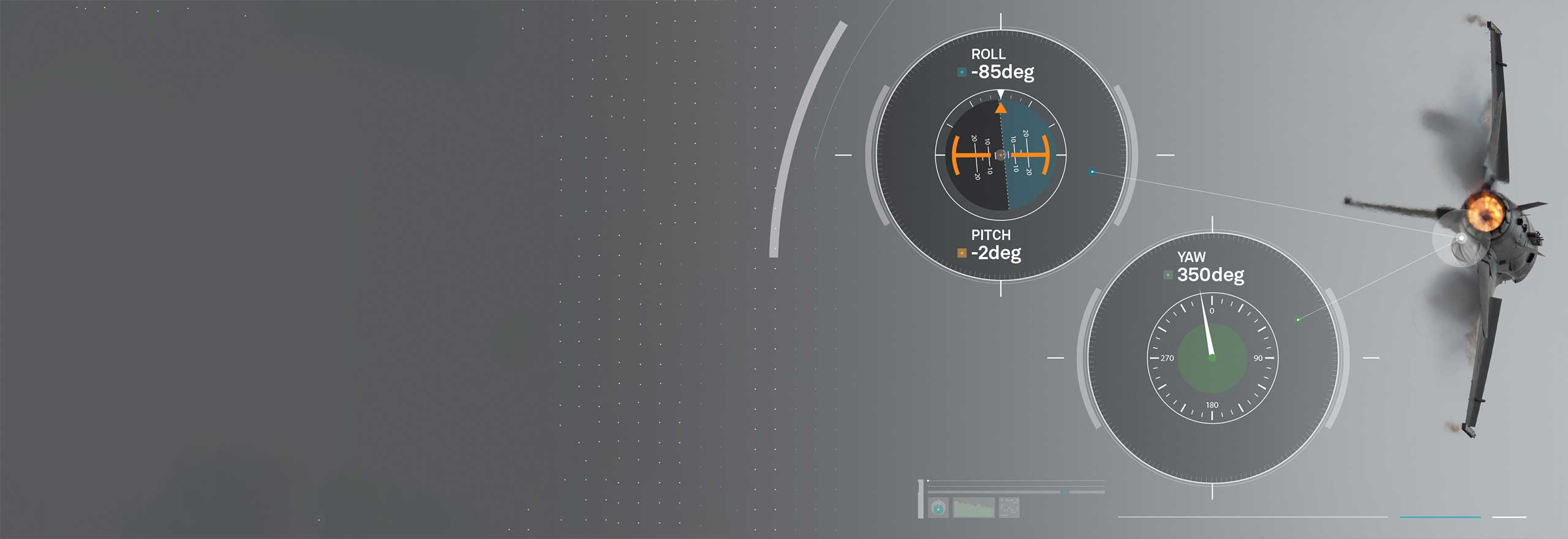

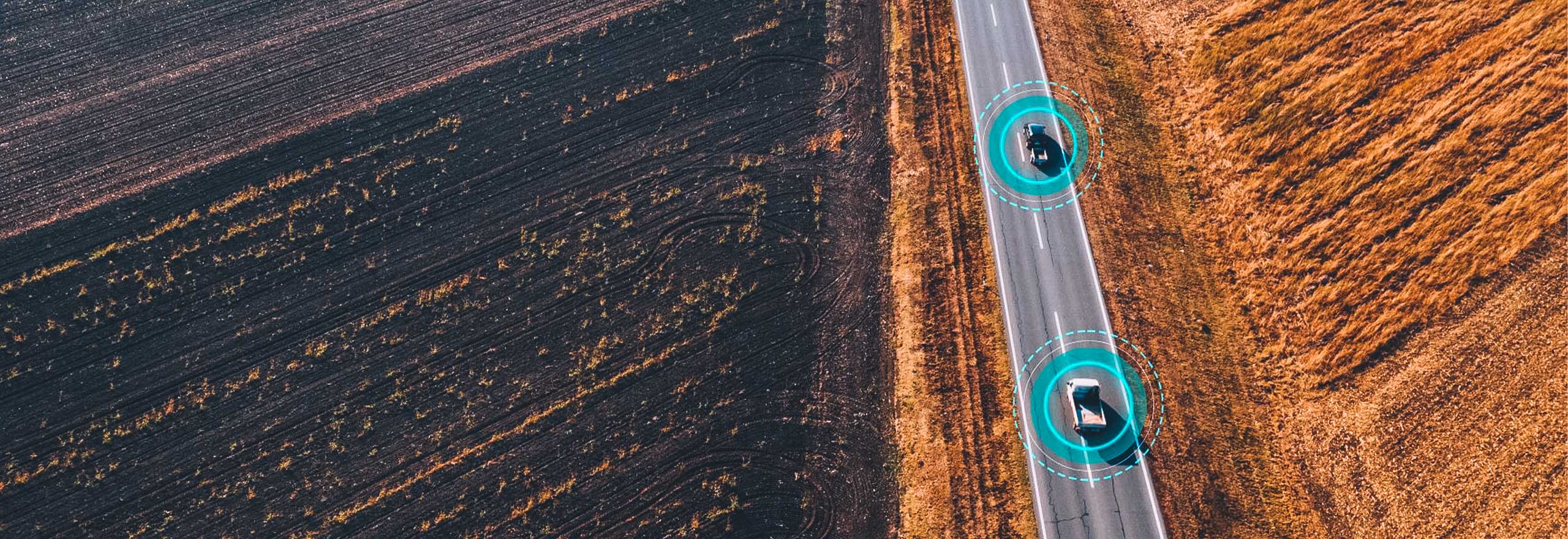

For example, Global Navigation Satellite Systems (GNSS) like the Global Positioning System (GPS) provide measurements that can be used to determine a position on the Earth. Inertial measurements from an Inertial Measurement Unit (IMU) can be used to determine a platform's velocity, acceleration and attitude: its heading, roll and pitch. Perception sensors like cameras (including those used for photogrammetry), LiDAR and RADAR measure a platform's surroundings and can be used to identify nearby objects or hazards, including road signs, pedestrians or objects to interact with.

How does sensor fusion enable autonomy?

An autonomous system relies on sensor fusion to understand where it is in the world, what hazards are nearby, which objects it should interact with and how, and more. Without sensor fusion algorithms and the perception and positioning technologies that supply their data, autonomous applications would not be possible.